If there’s one thing I know about humanity, it’s that we’re damn stubborn. And we love to break the rules. Take aeroplanes, for example: how on Earth did we figure those out? Well, the explanation is actually quite simple. We looked up and saw birds fly. “How do those fly?” we wondered. And, more importantly: “Why can’t we do that?” After centuries of trying, and countless iterations of similar-looking contraptions, we figured it out and took to the sky.

That’s what science is, really. Having an idea, then throwing a bunch of stuff at the wall to see what sticks, and being really stubborn about it along the way. And now we’re hard at work recreating the ultimate machine: the human brain. So, let’s talk about AI image generation.

Stable Diffusion for dummies

First things first: I am far from an AI expert, but I do know the basics. So, how can an AI create images? AI comes in many forms and applications, but when we talk about AI-generated images, we’re talking about machine learning. The kind of machine learning used for image generation, neural networks, is loosely inspired by how the human brain works. Just like studying vocabulary with flashcards, you feed a machine learning model both a problem and the solution to that problem. Over trillions of calculations, the model makes a guess, checks how far off it was, adjusts itself slightly and tries again. In other words, it gives itself feedback on every result it comes up with.
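To make the flashcard idea concrete, here’s a minimal sketch in Python. It’s a deliberately tiny stand-in for a real neural network: the “model” is just two numbers, `w` and `b`, and the hidden rule on the hypothetical flashcards (y = 3x + 2) is an assumption picked purely for illustration. The loop shows the guess, compare and adjust cycle, a technique known as gradient descent.

```python
import random

# Hypothetical "flashcards": each card pairs a problem (x) with its solution (y).
# The hidden rule the model has to discover is y = 3x + 2 (chosen for illustration).
cards = [(x, 3 * x + 2) for x in range(-5, 6)]

def train(cards, steps=2000, learning_rate=0.01, seed=0):
    """Learn w and b so that w * x + b matches the solutions on the cards."""
    rng = random.Random(seed)
    w, b = rng.uniform(-1, 1), rng.uniform(-1, 1)  # start from a random guess
    for _ in range(steps):
        x, y = rng.choice(cards)   # draw one flashcard
        guess = w * x + b          # the model's answer to the problem
        error = guess - y          # feedback: how far off was it?
        # Nudge each number a little in the direction that shrinks the error
        # (the gradient of the squared error 0.5 * error**2).
        w -= learning_rate * error * x
        b -= learning_rate * error
    return w, b

w, b = train(cards)
print(f"learned rule: y = {w:.2f}x + {b:.2f}")
```

Real image models run this same loop, only with hundreds of millions of numbers instead of two.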

This means the resulting model is much like the human brain in one respect: nobody can explain exactly how it arrives at an answer. Its knowledge isn’t written out in thousands of lines of code; it lives in the countless connections inside a neural network, each one adjusted during training until the network makes the right connections for the problem at hand. Keep in mind that this is an extremely oversimplified explanation, but it gives a basic understanding of how the whole thing works.

Apply this methodology to images, and we can start to understand how the technology behind AI-generated images, latent diffusion, came to be.

Removing noise

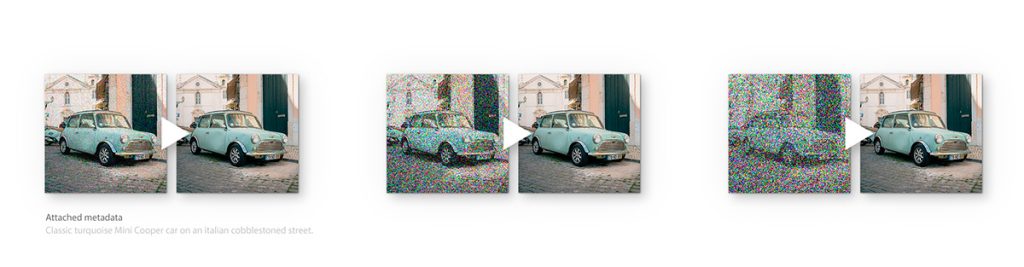

Let’s say you start with a picture and put a layer of noise on top of it at 10% opacity. Algorithms that can remove noise from an image already exist, so you let the computer remove the noise until you have a clean image again. Remember, it’s using the same principle as before: did my attempt get closer to, or further away from, the original image?
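The 10% noise layer and the “closer or further away?” check can be sketched in a few lines of Python. This follows the opacity analogy above rather than Stable Diffusion’s actual maths: real diffusion models mix the image with Gaussian noise using square-root weights, and they work on a compressed “latent” version of the image. The 5-pixel picture and all function names are hypothetical.

```python
import random

def add_noise(image, opacity, rng):
    """Blend a layer of random static over a grayscale image.

    `image` is a flat list of pixel values between 0 and 1; `opacity` runs from
    0.0 (clean picture) to 1.0 (nothing but noise), like a layer in a photo editor.
    Returns the noisy image together with the noise that was used.
    """
    noise = [rng.random() for _ in image]
    noisy = [(1 - opacity) * pixel + opacity * n for pixel, n in zip(image, noise)]
    return noisy, noise

def distance(a, b):
    """Mean squared difference: the 'did I get closer or further away?' score."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

def remove_noise(noisy, predicted_noise, opacity):
    """Undo the blend, given a guess of which noise was added (opacity must be < 1)."""
    return [(x - opacity * n) / (1 - opacity) for x, n in zip(noisy, predicted_noise)]

rng = random.Random(42)
image = [0.0, 0.25, 0.5, 0.75, 1.0]          # a tiny hypothetical 5-pixel picture
noisy, noise = add_noise(image, 0.10, rng)    # the 10% noise layer
restored = remove_noise(noisy, noise, 0.10)   # a perfect noise guess restores the picture
print(distance(noisy, image), distance(restored, image))
```

The last function shows why guessing the noise is enough: if a model can predict exactly which noise was added, it can subtract it and get the picture back. The original Stable Diffusion network is trained to do exactly that, predict the noise that was added.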

Once it figures out how to remove that noise and gives you the output you were after, you up the difficulty. Increase the opacity of the noise layer to 20% and let it figure out how to remove the noise again. You keep doing this until you finally reach 100%. At this point, the underlying image is gone, and all the model has to start from is pure noise. Eventually, after an enormous amount of trial and error, it learns to remove all of that noise anyway, essentially inventing a whole new picture.
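Here’s a rough Python sketch of the “start from pure noise” step, again in the opacity analogy. `predict_clean` stands in for a trained denoiser and is hypothetical; the toy version below has only “learned” that pixels are either black or white, whereas a real model has learned from billions of images. Starting at 100% noise, each step asks the denoiser for its best guess of the clean picture, then blends the noise back in a little more faintly, until none is left. This loosely mirrors deterministic samplers such as DDIM, but it is a simplification, not Stable Diffusion’s actual sampler.

```python
import random

def toy_denoiser(noisy, opacity):
    """Hypothetical stand-in for a trained network.

    It has only 'learned' that clean pixels are black (0.0) or white (1.0),
    so its best guess is to snap every pixel to the nearer of the two.
    """
    return [1.0 if value >= 0.5 else 0.0 for value in noisy]

def generate(predict_clean, num_pixels, steps=10, seed=0):
    """Start from 100% noise and lower the opacity one step at a time."""
    rng = random.Random(seed)
    x = [rng.random() for _ in range(num_pixels)]  # pure noise: no picture left
    for i in range(steps, 0, -1):
        opacity = i / steps             # 1.0, 0.9, ..., 0.1
        next_opacity = (i - 1) / steps  # one notch less noise (ends at 0.0)
        guess = predict_clean(x, opacity)
        # Work out which noise the guess implies, then blend it back in more faintly.
        noise = [(xi - (1 - opacity) * gi) / opacity for xi, gi in zip(x, guess)]
        x = [(1 - next_opacity) * gi + next_opacity * ni for gi, ni in zip(guess, noise)]
    return x

picture = generate(toy_denoiser, num_pixels=16, seed=7)
print(picture)
```

Because the starting noise is random, a different `seed` will usually give a different picture: the model isn’t retrieving a stored image, it’s settling the noise into something that fits what it has learned.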

Now that you have a model that can ‘invent’ images, you combine this latent diffusion technology with something called Contrastive Language-Image Pre-Training, aka CLIP. CLIP is another AI model, one that can judge how well a piece of text matches an image. It tells the latent diffusion model what it is supposed to generate; without it, the model has no idea what it’s making. With the help of CLIP, it can understand what a cat, a banana or a building is and what each should look like.
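The “how well does this text match this image?” judgement boils down to comparing two lists of numbers (embeddings), typically with cosine similarity. In the minimal sketch below, the three-number embeddings are hand-picked and hypothetical; real CLIP computes much longer vectors with a trained text encoder and a trained image encoder. Note also that Stable Diffusion doesn’t score finished images this way: it feeds the embedding from CLIP’s text encoder into the denoiser at every step, so the prompt steers which picture emerges from the noise.

```python
import math

def cosine_similarity(a, b):
    """1.0 means the two vectors point the same way; near 0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

# Hypothetical, hand-picked embeddings. Real CLIP vectors are far longer and
# come from trained encoders, not from a human.
text_embeddings = {
    "a cat": [0.9, 0.1, 0.0],
    "a banana": [0.0, 0.9, 0.2],
    "a building": [0.1, 0.0, 0.95],
}
image_embedding = [0.8, 0.2, 0.1]  # pretend this came from a photo of a cat

def best_caption(image_embedding, text_embeddings):
    """Return the caption whose embedding best matches the image embedding."""
    return max(text_embeddings,
               key=lambda text: cosine_similarity(image_embedding, text_embeddings[text]))

print(best_caption(image_embedding, text_embeddings))
```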

The best-known result of this mashup is a model called ‘Stable Diffusion’.

Now, stay with me, we’re almost there. To understand what different types of cats, buildings and cars look like, the model has to be trained on a lot of images. And when I say a lot, I mean a lot: its training images were drawn from a dataset of roughly 5 billion pictures, processed at 512x512 pixels, each one paired with a text label and the URL it came from.

The end result of all this training is a single file that distills a huge share of humanity’s visual knowledge into a few gigabytes.
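To get a feel for just how tight that squeeze is, here’s some back-of-the-envelope arithmetic in Python. It assumes the 5 billion images are stored as uncompressed 8-bit RGB at 512x512 pixels and that the model file is about 4 GB; both are simplifying assumptions, since the real dataset holds compressed files of varying sizes.

```python
NUM_IMAGES = 5_000_000_000        # the figure quoted above
BYTES_PER_IMAGE = 512 * 512 * 3   # assumption: uncompressed 8-bit RGB at 512x512
MODEL_FILE_BYTES = 4 * 10**9      # assumption: a model file of about 4 GB

raw_dataset_bytes = NUM_IMAGES * BYTES_PER_IMAGE
compression_ratio = raw_dataset_bytes // MODEL_FILE_BYTES
bytes_per_image = MODEL_FILE_BYTES / NUM_IMAGES

print(f"raw training images: {raw_dataset_bytes / 10**15:.2f} petabytes")
print(f"compression ratio:   {compression_ratio:,}x")
print(f"model bytes per training image: {bytes_per_image}")
```

That works out to less than one byte of model per training image, which suggests the model mostly stores general patterns rather than the pictures themselves.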

Pretty neat trick, huh? But that trick now enables us to generate whatever we can imagine, just by typing a few words!

What’s the market doing with all of that?

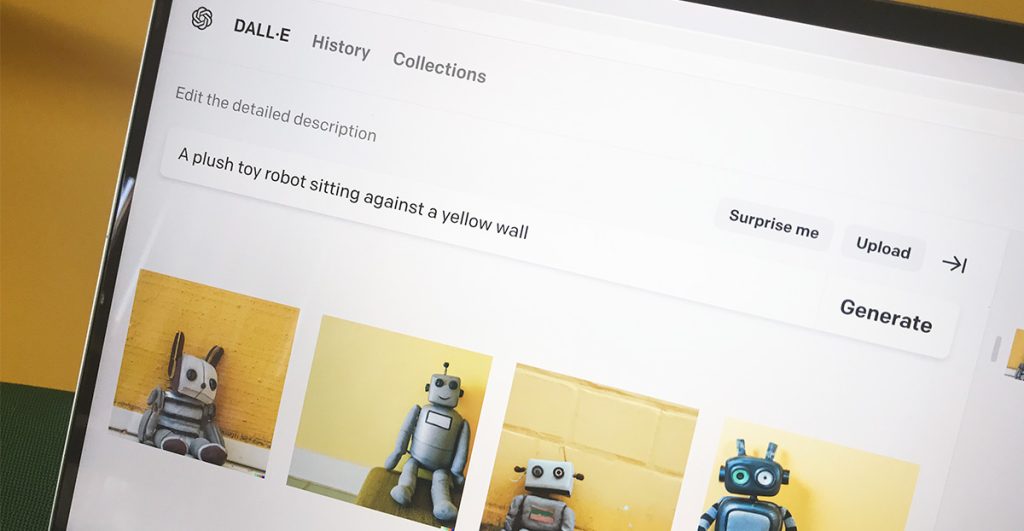

There are currently two major platforms where anyone can start creating with this technology: DALL-E 2 and Midjourney. Unlike Stable Diffusion, both are closed platforms: they run their own models built on similar technology, made them user-friendly and added a paid credit or subscription system. Stable Diffusion, on the other hand, has been released as open source, meaning you can run it on your own system (provided you have the hardware and the know-how). It also means thousands of developers worldwide are constantly adding features to it. That’s why this space is booming and moving much faster than any of us could have anticipated.

But what about my job?

Being able to generate tangible images at the speed of thought was something we could never have imagined. Now that it’s here, it can seem kind of scary at first. But let me make it a bit less scary and tell you why I think we have to embrace it and look at the benefits it brings us.

The goal of making movies shouldn’t be sitting behind your computer for five days to create a 1.5-second VFX shot of, say, a building collapsing. The goal should be to write a prompt like ‘a building collapsing’ and instantly get four variations of that shot. This is where the divide between AI believers and non-believers comes in: an entire industry was built on creating those VFX shots. They require years of experience, mastery of multiple software packages and plain hard work. And all of that could be replaced with a simple text prompt? Who wouldn’t be scared?

Embracing AI

It all comes down to mindset. How do you approach this technology? I say: embrace it, rather than fearing it will replace you. Enjoy the benefits, let it fuel your creativity and use it to work faster and better. Because in the end, it’s just a tool. A tool that has been trained on all of our work. It doesn’t come up with new styles by itself; it can’t. It needs human-made art to learn from, and it needs a human to write the right prompt to get the result you’re after (and trust me, that’s harder than you think).

We as humans always push the boundaries and strive for the next thing. We went from gliders to Space Shuttles. If you think this is the end of the road, don’t worry. We will find three new problems to solve now that we’ve unlocked this technology… and five more problems after that.

Want to know more about the possibilities of AI? We’d love to help you out!